The third entry from Mao Yuan Yi’s ChaoXian Shifa 朝鮮勢法 (Korean Stances) we will look at is the “ban tou” or Leopard Head Strike. The technique is familiar to a great many arts and find a number of iterations within the Wubei Zhi. Ban tou is related to two previous entries, Ju Ding and Zuo Yi. It’s described use runs very much in line them. The three together represent one of the most common and useful skills with the long sword; to parry and riposte from a high guard/ position.

4.

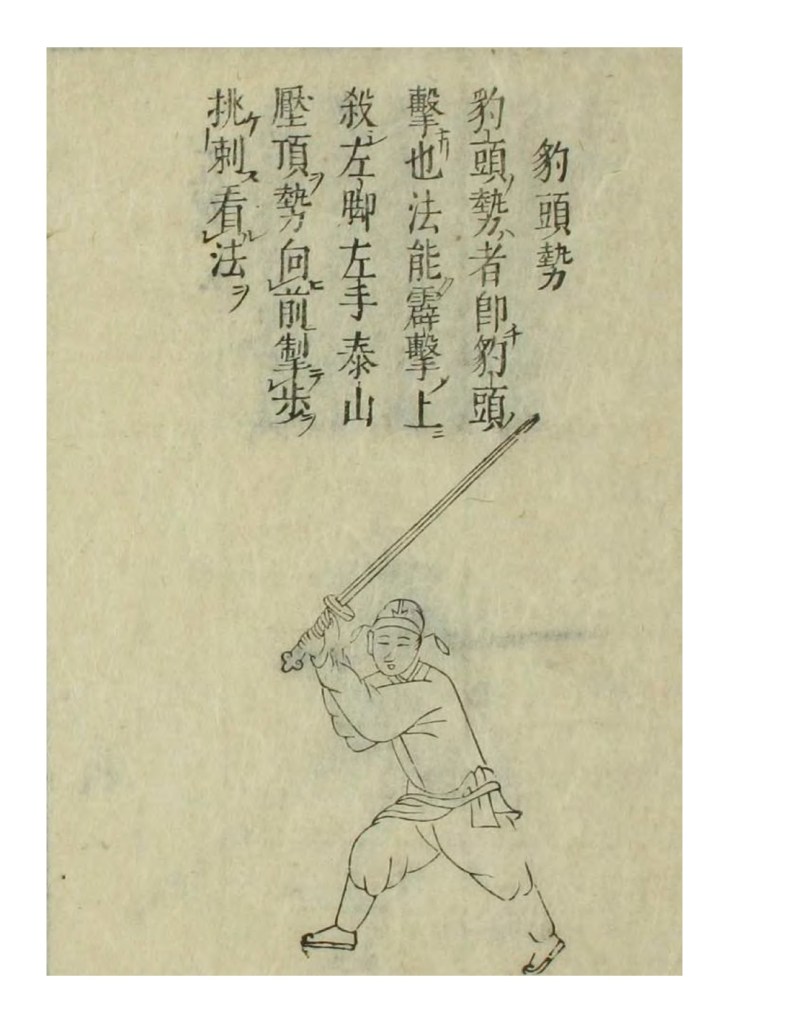

豹頭勢者即豹頭擊也

法能霹劈擊上殺

左脚左手,泰山壓頂勢

向前掣步挑刺

看法

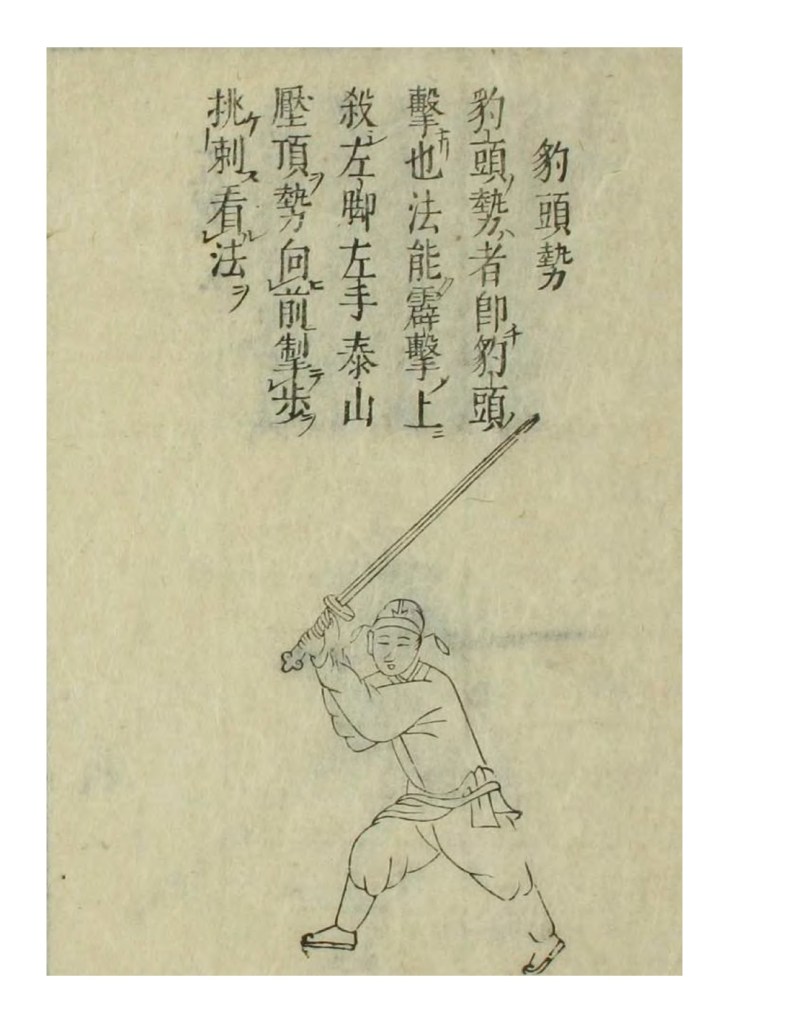

The “Bao Tou” guard represents the “Leopard Head” strike.

It is able to deliver a powerful blow down on to an opponent.

With your left foot and left hand, assume the posture “Crushed by Mt. Tai”.

Take a quick step forward and perform a “Tiao” (flicking) cut with the tip of the sword.

See illustration:

Commentary:

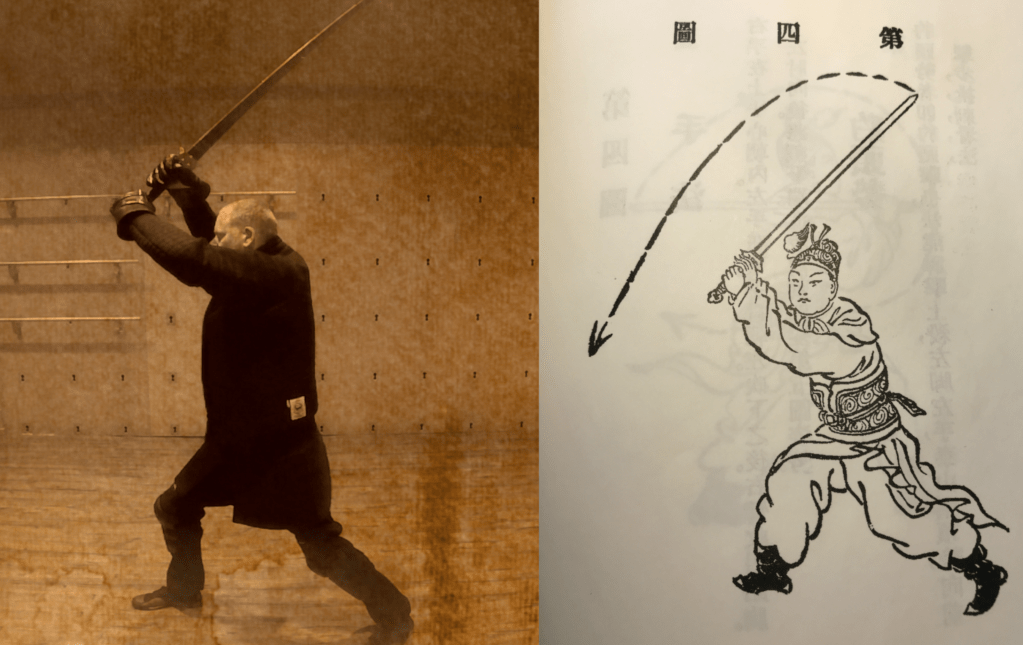

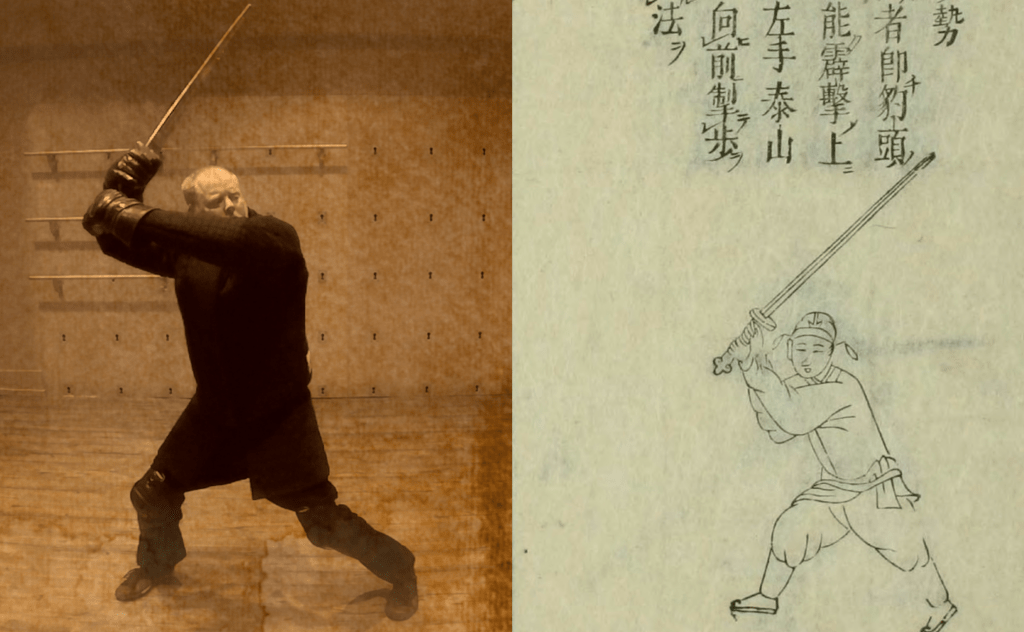

This strike seems fairly straight forward at first glance. It describes a powerful downward blow followed by a flick upward. The illustration depicts a high guard position that supports this interpretation. A simple high guard is a very useful position and there are few high guards held in this text.

The downward blow is simple enough. The entry about “tiao” as the follow up is subject to some interpretation. The important thing to remember with this technique is that it is a tip attack and one should move the blade as if there is resistance at the very tip. This creates a flicking type of motion and hit.

In the “Gentleman’s Sword” a Republic era reprint and commentary on the ChaoXian ShiFa by Jin Yi-Ming, the technique is described this way:

第四架豹頭勢:右手在上手。 心朝內左手在下。手背朝下。砍下之後。右劈收厄貼胸。左肘向後。將劍平端。進步前刺。即變第五圖婆勢。

Leopard Head Strike: Right hand is on top. The palm faces inside with the left hand below. The back of the hand faces downward. Chop downward then chop right and hold the the handle to the chest. The left elbow points behind. The sword is held level. Step in and stab forward. Quickly transition to the fifth illustrated technique.

Jin Yi-Ming: Gentelman’s Sword: Junzi Jian.

It is fairly clear to me that the description of Jin is intending to transition into the next position in the text- the “Exposed Belly Stab”. This basic sequence is the dominant interpretation of this technique. It shares many similarities with the application of the Left Wing Strike yet, is a separate entry.

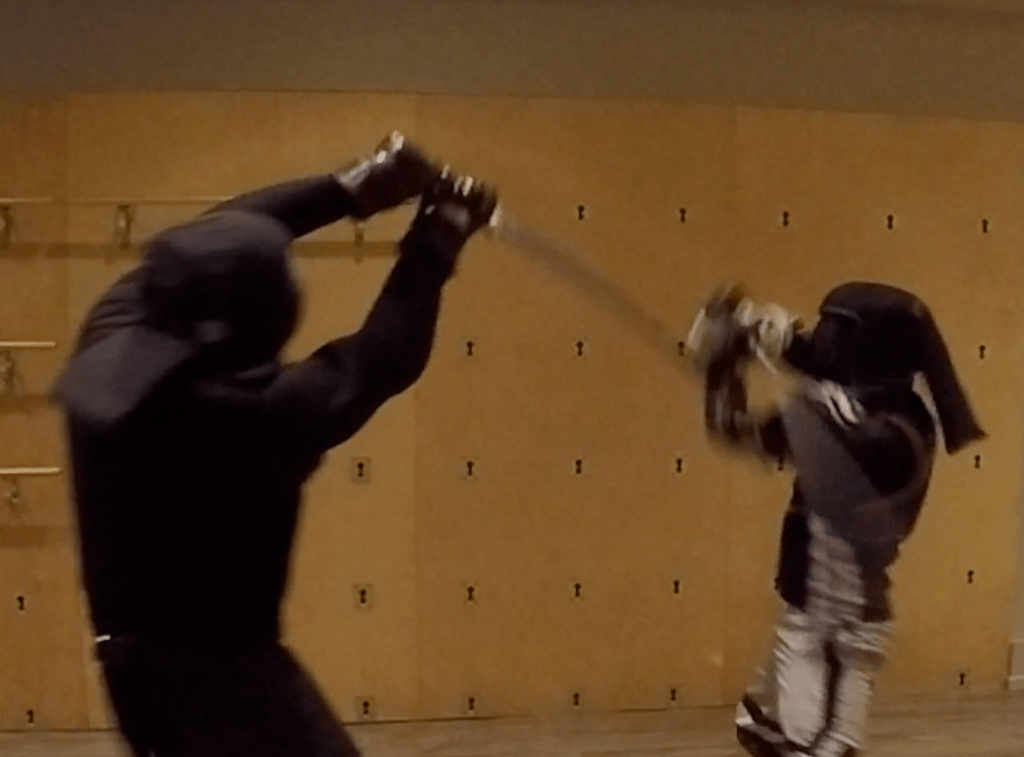

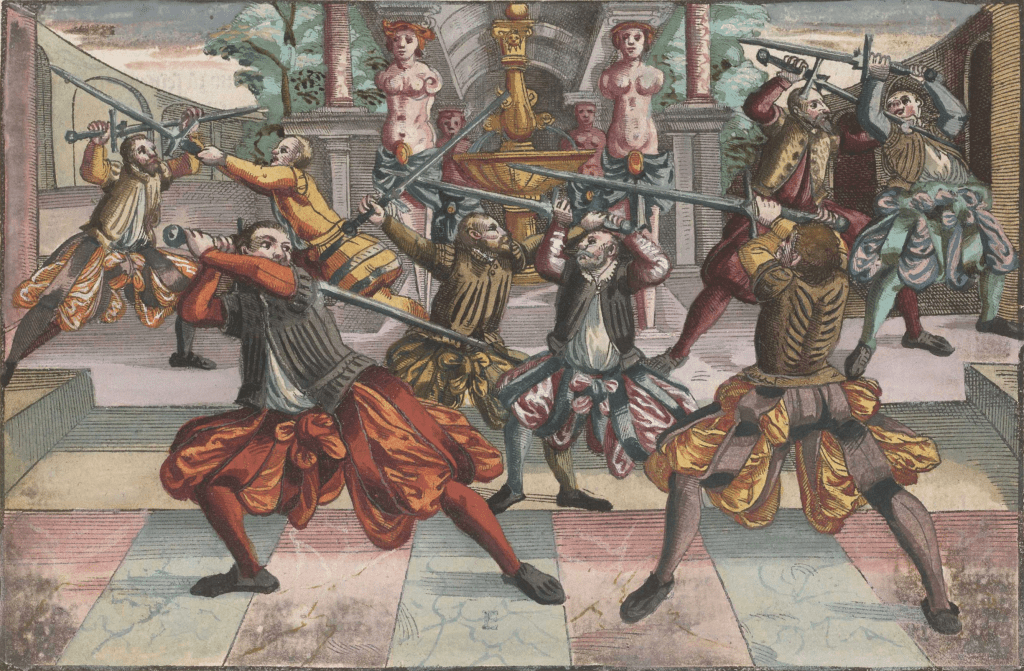

This is a very common and, one could argue, intuitive technique. Holding the sword above the head with the blade pointed backward or away from the opponent readies a downward strike of considerable power. It is, of course, a common go-to position and strike thrown in all manner of two handed sword systems. The applications of the position and strike are equally obvious and uncontroversial. If you watch any sort of match like Kendo or HEMA longsword, this strike is well represented.

In German longsword, the position is called “Vom Tag” or “the roof”. And it is also accompanied by a “half “ version from the shoulder. Taking a look at Kendo, Jodan no Kamae is the analogous position. In all these martial arts and sports, this position is used frequently. The simplicity of holding a sword high and letting it drop its so universal it almost does not to need to be pointed out.

Hand and Foot

The major issue with the Leopard Head Strike entry is the third section describing the hand and foot. Most interpretations of this posture have the swordsman holding the sword hilt up by the head and looking forward past the pommel. With this assumption, that would put the right foot forward and the left hand in front. But, the text says it is the left foot and hand that are moving/ or in front. This problem occurs in several other entries and the exact translation of this section is still elusive.

We have discussed this previously in the overview of the text. But compounding this particular entry are the imprecise drawing style, the sparse text and the exclusion of an opponent one is responding to to give some context of the movement. The gaze of the subject is not clear. It appears that he is looking forward under the weapon, but his gaze is more to the viewer (although his eyes do appear to be gazing toward his hands). We know that profiles of certain positions are used in the text, so the fact that the drawing’s face in not in profile seems to be intentional. But, was the foot position correct and why does it not seem to match the text? The text could be wrong, this is a widely known occurrence in Ming Texts, and should read “right foot left hand”. Or the drawing could be wrong, with the left foot being forward (a more common expression) and the legs being drawn imprecisely.

However, if we switch our own expectations of where the opponent is in relation to the image, we get another related, yet workable technique. If this guard is being held with the sword behind the welder, who in turn is looking back over his shoulder somewhat, this becomes another useful position that is found in several other martial art systems from around the world. In German Longsword it is the “Wrath Cut” or “Zorn Hau”. Italian longsword has the similar “Posta di Donna” or Women’s guard. Both of these examples demonstrate that this position is at least a familiar one with two handed swords.

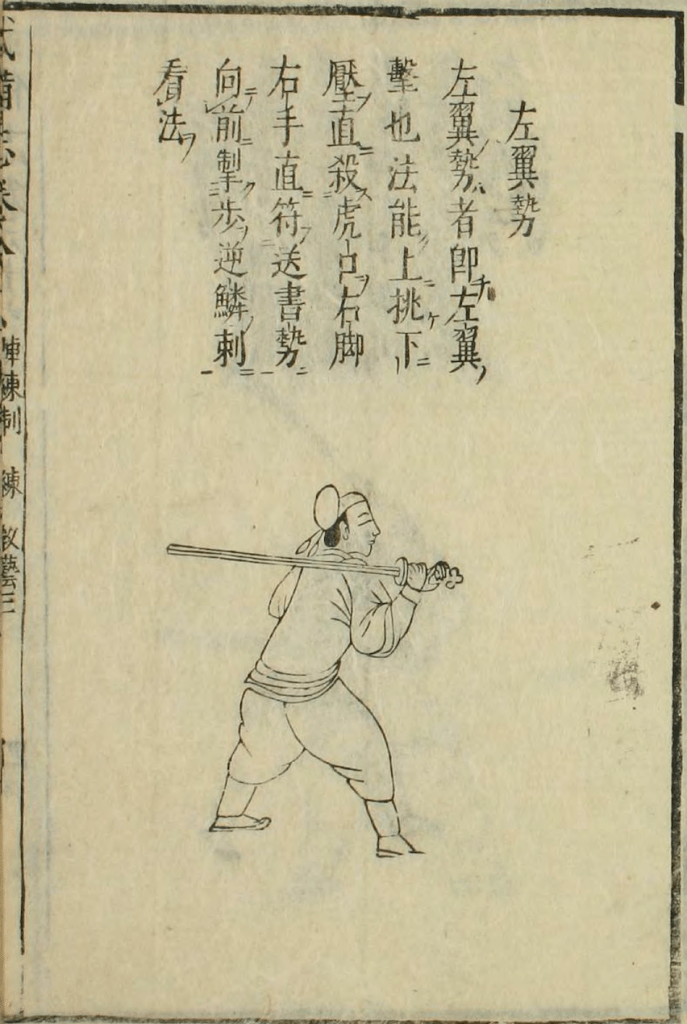

The two examples do have some differences. Both European examples hold the blade low over the back. There are some positions in the Korean Stances that can be seen to do this as well. The Left Wing and Right Wing Stances can be interpreted to be analogous to Zorn Hut and Post Di Donna. But, if we take into account the footwork of left hand left foot, this twisted high guard with the sword held back does offer some interesting possibilities.

Performance and application

The two interpretations presented here are not the only ones. But, they do tend to have a lot of commonality with other systems. Using them in freeplay, they are fairly high percentage as far as success. There is also considerable exchangeability between similar techniques.

Interpretation #1

The simplest example of the importance of a high guard. Delivering powerful blows down on an opponent is perhaps the most intuitive thing in the world. However, it is not as easy to throw them effectively and land them with regularity. The movement is large and the position held is often extended. Not exactly a subtle stance. It does not take Aldo Nadi to figure out that a person holding such a guard will more than likely strike down toward the head. If it lands on the target, great, but it will almost invariably incite the opponent to raise their guard and defend their head. This of course can open new targets, put them on the defensive, or be used for the second intention. The mechanics of the technique and its structure are its advantage and disadvantage at the same time.

German Longsword: The Roof

Joachim Meyer, in his excellent and detailed longsword treatise, talks about the position’s versatility in fighting.

The Guard of the Roof, which is also known as the High Guard, is explained as follows. Stand with your Left Foot forward, hold your Sword high over your head so its point is directly above, consider the figure on the left of the image above, illustration C, which indicates how one can operate from above, that all strikes can be fenced from the Roof or High Guard, which is why this Guard is named the Roof.

Joachim Meyer Translated by Mike Rasmusson at https://wiktenauer.com/wiki/Joachim_Meyer#Sword

It should be obvious to the reader that the opposite foot is forward in Meyer’s description. Which speaks to the hand and foot problem again.

Kendo: Jodan No Kamae

If we look at Kendo, another advantage to the position reveals it’s self; speed. The mechanics of the cut are such that one may strike with blinding speed. Which of course makes it very good for head attacks. Often times, even when the opponent parries, the strike is too fast and they are hit anyway.

Interpretation #2

This interpretation changes the location of the opponent to behind the left shoulder of the subject in the drawing. It is similar to the Left Wing Strike in position, with the opposite leg forward. Performance of the technique would be to step forward with the right/backward leg as one threw the overhead strike. This can be aimed at the opponent for the weapon, but acting non the weapon offers more opportunity for examination Holding your sword back in this position can do a number of things. It can entreat an aggressive opponent to attack at one’s “small door” or left side, in this case or it can intimidate a less confident opponent. The power in the strike coming down can very effectively displace the opponents weapon and break their rhythm enough to allow you to successfully score.

Wrathful Guard

The Wrathful Guard is known as such since the stance has a wrathful bearing, as will be shown. Stand with your left foot forward, hold your sword out from your right shoulder, so that the blade hangs behind you to threaten forward strikes, and mark this well, that all strikes out from the Guard of the Ox can be intercepted from the Wrathful stance, indeed leading from this stance shows unequal bearing from which One can entice onward, whereupon one can move quickly against the other as needed, as is shown by the Figure in illustration E (on the left).

Joachim Meyer Translated by Mike Rasmusson at https://wiktenauer.com/wiki/Joachim_Meyer#Sword

The below Meyer plays as presented by Björn Rüther shows the possibilities in which the guard can be incorporated. Play #4 is one that seems to conform to the text in the Korean Stances:

Tiao 挑

The follow up is called “Tiao Ci”. Ci is the word for attacking with the tip. “Tiao” means to “carry on a the end of a pole”, and his referring to the feeling of “flicking” the tip to either cut or parry with a sheering motion. This is discussed in the introduction a bit, here. This abrupt, upward cut, often done with the short or false edge, is found through out this text. It is still in use today in many systems of swordplay from China. I have translated it as “flick” as that seems the most appropriate for the context.

This follow up does form the outline for a particular sequence or play that is a theme. A powerful downward blood followed by a rising attack or thrust. The downward blow is used as a way to disrupt or move the opponents weapon opening up their center. This strategy is shared by Left Wing Strike. From a downward blow to a thrust to the neck or chest.

Conclusion

The interpretation of such a text is always fraught with difficulty. And at the end of the day, we must admit that much of our work is making educated guesses based on the evidence available to us. The sparse and brief nature of the information presented by Mao Yuan Yi can be decoded in many different way by people of different backgrounds. We do not know if these positions are supposed to be used like guards are in European systems. Or if they are used as simply transitional positions by which to map out your movements.

But, as we explore these texts more, it should become apparent that there is more information here than meets the eye. It does take some digging and some creative thinking. If we look at the three entries we have discussed so far, they can be seen and different expressions and or permutations of the same principle. Namely, raising your sword high and bring it dow is a very useful technique to have for a host of reasons.

Leopard Head Strike

Raise the Cauldron

Left Winig Strike

Drill Strike

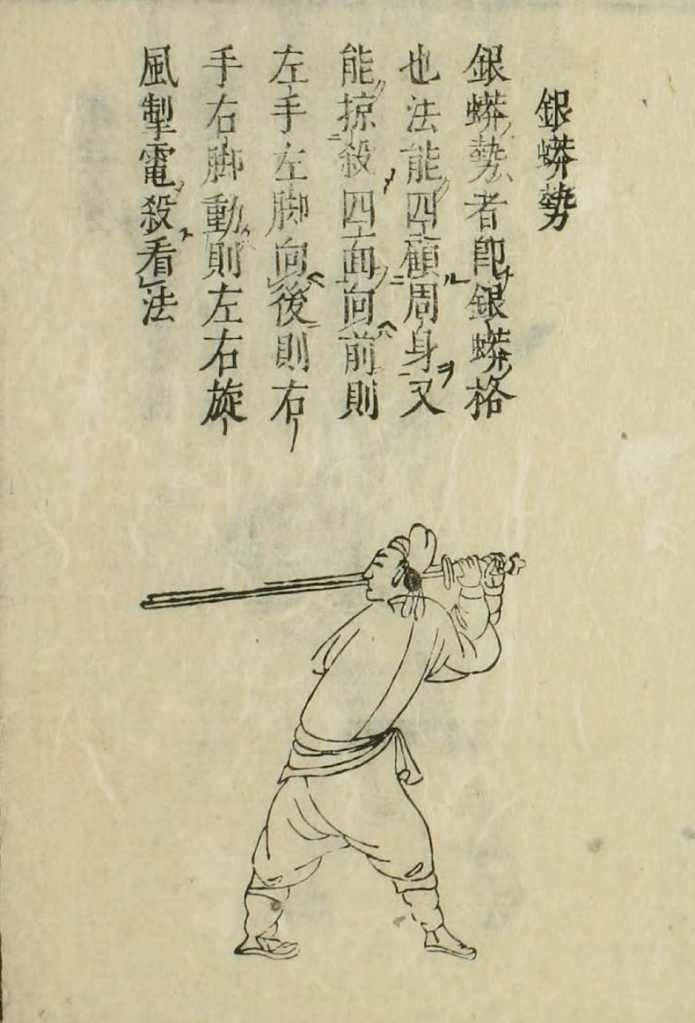

Silver Python Block

Right Wing Strike

This also represents one of only six “high guards” or positions held at head level or above. At least as what is illustrated in the text. As we have stated, the true intent of the author is lost to us. We can only go on our current experience and knowledge. Which is extensive as modern people. We can cross reference systems and martial cultures from places we never would have had access to before. And when we search for this information, it is often very similar, if not identical.

Selected bibliography

- Ma, Mingda馬明達. 無系列Wu Xi Lie. chu ban. ed. Vol. A113-A114, 武學探針Wu Xue Tan Zhen. Taibei Shi: Yi wen chu ban you xian gong si, 2003.

- Mao, Yuanyi茅元億. 武備志Wu Bei Zhi. [China: s.n. ; not before, 1644] Map. Retrieved from the Library of Congress, https://www.loc.gov/item/2004633695/.

- Meyer, Joachim, and Jeffrey L. Forgeng. The art of sword combat: a 1568 German treatise on swordsmanship. London: Frontline Books, 2016.

- Peers, Chris. Men-at-arms Series. Vol. 307, Late Imperial Chinese Armies 1520-1840. London: Osprey, 1997

- Porter, Jonathan. Imperial China, 1350-1900. Lanham: Rowman & Littlefield, 2016.

- Qi, Jiguang戚繼光. Wu Shu Xi Lie武術系列. chu ban. ed. Vol. 6, Ji Xiao Xin Shu.績效新書 Tai bei shi: Wu zhou, 2000min 89.